What would happen if we combined blogs and BitTorrent?

I believe we would have a system that is ideal for publishing and sharing media. Users would not be dependent on a specific host to hold and display their content. As long as it is important to some part of the community, the content will remain available. Users’ nodes would act both as a reader (via a web browser or dedicated client) and publisher of content. This would be a true level playing field where anyone can contribute to the sum total of content and knowledge, without the loss of content and media important to the community when sites (nodes) go off-line.

I am certain this is not the first time I have expressed an idea based around peer-to-peer blogging and document distribution. Even so, I’d like to take a moment to explore the possibility of a decentralised, social, blog-like ecosystem that is not dependent on the source remaining online.

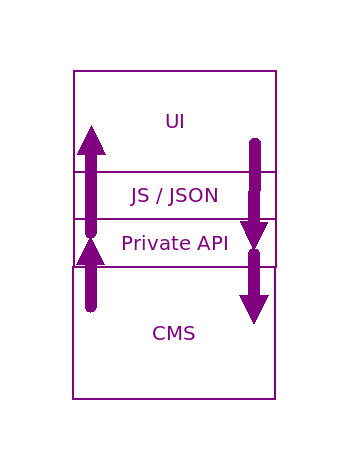

Classic CMS

First, let us look at the basic model of your classic CMS.

Your classic CMS architecture (be that WordPress, Facebook, or Tumblr) looks something like the diagram to the right.

The model exists on two layers – the client side with HTML (the UI) and JavaScript (the UI logic), which speaks to the API, usually via JSON. The API acts as the gatekeeper to the CMS itself. With WordPress, the CMS sends the HTML page to the reader on request. With Facebook, a core UI loads and then pulls the content via the API. However, that API is closed. Private, if you will. Usually, this private connection is protected by session cookies and techniques like nonces (numbers used once).

With this setup (screen scrapers aside), one website holds and displays the content. If the site goes offline or is taken down, the content is gone.

However, I believe there is a bridge between site restricted publishing and an open social graph of information sharing.

Torrent based publishing: The benefits

There are a number of potential benefits from building a decentralised social system. Some of these are drawn from implications of the abstract (see lower down the page).

Each node in the social graph would cache a copy of the published item and be able to pass it on to other nodes that express an interest. Instead of one privately owned social network, anyone could set up and run their own outpost in a wider, free social community.

Each node in the network could act as a mirror for content allowing large media to be streamed from smaller publishers that might not be able to afford such bandwidth demands.

Scales with use

Unlike a regular website which, when popular, can slow down with a sudden rush of traffic, peer shared content would become faster and more readily available with the increase in users interacting with it.

Thus large video media could be streamed from sites that might not otherwise be able to support large numbers of concurrent users.

Censorship proof

It would be very hard to censor content once it is released into the wild. Thus, in restrictive regimes news of atrocities can be released and spread beyond the control of any one government.

The downside is that your content cannot be censored – even by you. Once you hit publish, your content will be out there, for better or worse, for as long as any node in the ecosystem sees fit to hold a copy.

Comment on anything

Any content released in this format can be subject to comments (reactions). The “related” field is there so that a reader can display not only your reaction but the content to which you are reacting.

Comments, reactions, and discussion around your content serve to propel it further afield.

Control your own social graph

While it would not be possible to restrict who sees your content, you can control which actors you pull content from and to whom you give first line access in turn.

Your social graph is yours to control. And, assuming some relatively basic security precautions, your viewing habits are hard to impossible to track.

Data Privacy

With a system such as this, there is no big corporation selling your data for profit. While the content you create will, by definition, be public, it is only public when or if you choose to make it public. What you passively consume is, by and large, your own business.

Share anything

As this would be a censorship free, unregulated, social ecosystem, there can be no limits on what you could share. As long as you find interested readers willing to cache your content within their node-space, your work will remain long after the original is gone.

Torrent based publishing: The drawbacks

This would not be without its own drawbacks.

Fake news and propaganda

One of the side effects of an unregulated and free social ecosystem is that there would be no larger overlord to curtail fake news and divisive propaganda. This could allow a rise in pernicious content. Only the maturity, sensibilities, and selectiveness of the wider community would in any way rein in both untrue and manipulative content. To an extent, this is already true of many social websites that have little or no control or limits on harmful content.

The only solution to this would be solid state-wide education in all participating countries.

There is no taking it back

Once you release or publish a piece of media, there is little you can do to take it back. As soon as another user caches your content, your control is gone. The very act of publishing is, more than it is now, an act of relinquishing control.

This drawback should be mitigated by mature and considered use. Specifically: publish in haste, repent at leisure. In other words, give yourself time to cool off before you hit publish.

Implied limits of copyright

By publishing into a peer-to-peer environment, you are, by implication, surrendering some degree of copyright. It would be implied that you give consent to have your content displayed in places other than those under your control.

It would have to go without saying that, if you release content this way, you grant all required permissions for your content to be stored and shared. This is not so different to sharing content on other forms of social media except that you would be granting the right to the community rather than a single corporation.

Copyright abuse

This system would, like BitTorrent, be potentially open to copyright abuse – the sharing of content illegally.

Such abuses would be no harder, but no easier, to tackle than illegal content on BitTorrent is now.

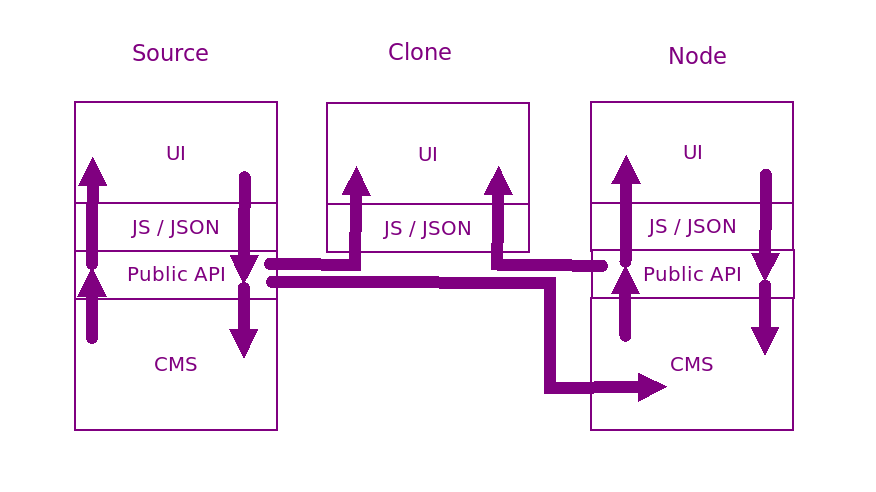

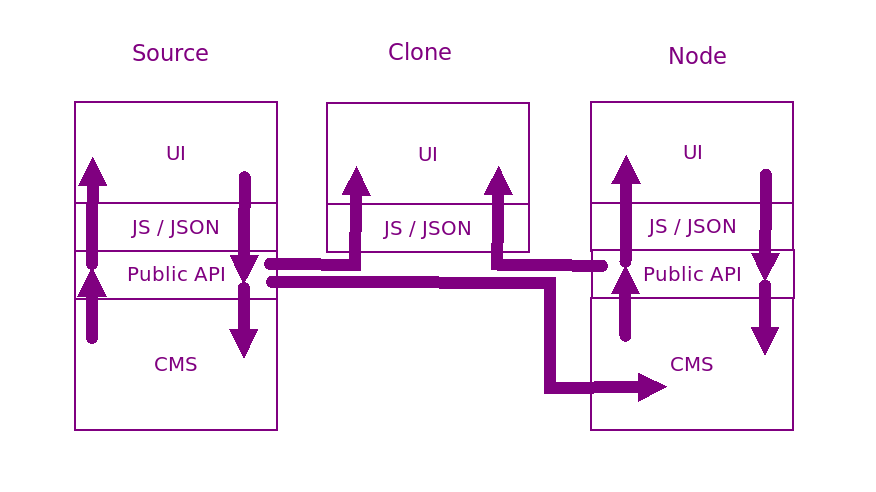

Torrent based publishing: The abstract

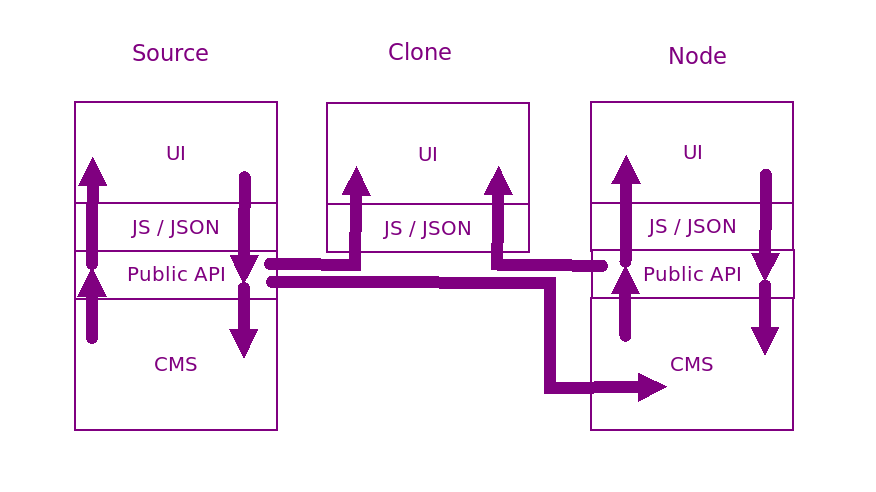

Now that we have looked at the pros and cons of such an ecosystem, let’s look at the technical side of things. A simple change to your classic CMS architecture would enable this to be created: opening up the API layer would allow for multi-site viewing of content.

I’ve labeled these “source” where the work was first released, “clone” where it is simply viewed, and “node” where the content is peer-hosted.

Add to that a SHA-256 digest as a signature and every item posted into the ecosystem can be uniquely identified and verified as unchanged. This, as it happens, would allow such an API to be broadly compatible with the BitTorrent protocol.

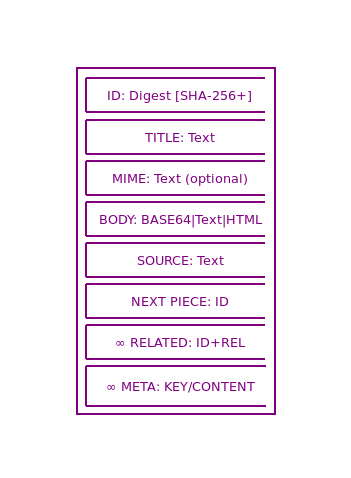

Allow me to talk you through my proposed data structure and the way in which it maintains a degree of compatibility with the core concepts of BitTorrent.

- ID Digest

- Title

- MIME

- Body

- Source

- Next Piece

- Related

- Meta
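As a rough illustration, the fields listed above could be modelled something like this in Python. The class name, the snake_case field names, and the choice of canonical JSON as the thing that gets hashed are all my own assumptions for the sake of the sketch, not part of any defined format.

```python
from dataclasses import dataclass, field, asdict
from typing import Optional
import hashlib
import json


@dataclass
class Item:
    """One published item; the fields mirror the list above (names are illustrative)."""
    title: str
    mime: str
    body: str
    source: str = ""
    next_piece: Optional[str] = None             # ID of the next sequential piece, if any
    related: list = field(default_factory=list)  # e.g. [{"id": "...", "rel": "..."}]
    meta: dict = field(default_factory=dict)

    def digest(self) -> str:
        """ID digest: SHA-256 over a canonical JSON form of all the other fields."""
        # Assumption: sorted keys and compact separators give every node the same bytes.
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


post = Item(title="Hello", mime="text/markdown", body="My *first* post.", source="Example author")
print(post.digest())  # 64 hexadecimal characters
```

Because the ID is derived from everything else, it never needs to be stored separately from the content it identifies.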

ID

I have already touched on the SHA-256 ID which would be a way by which nodes could request content from their peers. This by necessity implies peer-nodes that your private instance interacts with. This might imply a case of following other nodes in much the same way we can subscribe to blog feeds today. These first-level contacts would be your first port of contact in seeking specific content.

The hash digest of the rest of the content would ensure that you are receiving an untampered with copy of the content.
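To make that a little more concrete, here is a minimal sketch of a node asking its first-level contacts for an item and only accepting a copy that still hashes to the ID it asked for. The `Node` class, the `compute_id` helper, and the in-memory "network" are hypothetical stand-ins; a real node would talk to its peers over HTTP or similar.

```python
import hashlib
import json


def compute_id(item: dict) -> str:
    """Hash every field except 'id' (canonical JSON is an assumption of this sketch)."""
    rest = {key: value for key, value in item.items() if key != "id"}
    canonical = json.dumps(rest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class Node:
    def __init__(self, name: str):
        self.name = name
        self.cache = {}   # id -> item held by this node
        self.peers = []   # first-level contacts (the nodes we follow)

    def publish(self, item: dict) -> str:
        item = dict(item)
        item["id"] = compute_id(item)
        self.cache[item["id"]] = item
        return item["id"]

    def fetch(self, item_id: str):
        """Return the item from our cache or a first-level peer, else None."""
        if item_id in self.cache:
            return self.cache[item_id]
        for peer in self.peers:
            item = peer.cache.get(item_id)
            # Reject any copy whose content no longer matches the requested ID.
            if item is not None and compute_id(item) == item_id:
                self.cache[item_id] = dict(item)  # we now mirror it for others
                return self.cache[item_id]
        return None


alice, bob = Node("alice"), Node("bob")
bob.peers.append(alice)
post_id = alice.publish({"title": "Hello", "mime": "text/plain", "body": "First post"})
print(bob.fetch(post_id)["title"])  # Hello
```

Once Bob has fetched the post, his node holds its own copy, so the item stays available to Bob's followers even if Alice's node disappears.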

Title

Title is, I hope, self-explanatory. It is what the author called the content. For a document (say a blog post) this would be the headline. For a reply maybe something like, “Re: Your new headline”.

MIME

MIME would allow an indicator of the formatting of the main content. As this is likely to be passed by JSON and/or XML, non-text content could be Base64 encoded to remain text safe. Furthermore, with the “Next Piece” hash, a large file could be broken into many parts (as is done with a torrent). I imagine that a parent item holding the parts in the “Meta” section would act as the point of reference.

By allowing all forms of content, high-bandwidth items, such as video, could be shared without appreciable bandwidth drain on any one host, as pieces could be obtained from multiple peers. In this regard, the system would be functionally identical to BitTorrent.
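A small sketch of that idea, assuming pieces are plain dictionaries: binary data is cut into fixed-size chunks, each chunk is Base64 encoded so it survives a trip through JSON, and a parent item lists the piece IDs in its meta section. The `pieces` and `length` meta keys and both function names are invented for this example.

```python
import base64
import hashlib
import json


def compute_id(item: dict) -> str:
    canonical = json.dumps(item, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def split_into_pieces(data: bytes, mime: str, piece_size: int):
    """Return (parent_item, {piece_id: piece_item}) for a binary payload."""
    pieces = {}
    order = []
    for start in range(0, len(data), piece_size):
        chunk = data[start:start + piece_size]
        piece = {
            "mime": mime,
            "body": base64.b64encode(chunk).decode("ascii"),  # text safe for JSON/XML
        }
        piece_id = compute_id(piece)
        pieces[piece_id] = piece
        order.append(piece_id)
    # The parent carries no data itself; it is the point of reference for the parts.
    parent = {"mime": mime, "body": "", "meta": {"pieces": order, "length": len(data)}}
    return parent, pieces


def reassemble(parent: dict, pieces: dict) -> bytes:
    """Decode and join the pieces in the order the parent lists them."""
    return b"".join(base64.b64decode(pieces[pid]["body"]) for pid in parent["meta"]["pieces"])


data = bytes(range(256)) * 10                       # 2560 bytes standing in for a video
parent, pieces = split_into_pieces(data, "video/mp4", 1000)
print(len(parent["meta"]["pieces"]))                # 3
print(reassemble(parent, pieces) == data)           # True
```

Since every piece has its own ID, each one could come from a different peer and still be checked individually before it is stitched back together.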

Body

Body is where the shared content resides. It would be down to the community to decide what formats they are willing to consume and share, but I suggest that text and some limited subset of HTML (to prevent cross-site scripting attacks), along with Markdown or similar, would be the norm for readable content.

Source

Source is a text-only field that I envisage being used as a place where the originating author can insert their own details as well as, perhaps, details of the originating node.

This is in part inspired by the way torrent creators often like to insert a text file or readme about the torrent. One likes to receive credit, and that is what this field offers.

Next Piece

In a chain of linearly connected content – for example, a video broken into pieces obtained from peers non-sequentially – the next piece is simply the hash or magnet for the next sequential file.

Additionally, a work such as a novel could be divided into chapters using this field. This is simply a way to give the player, display UI, or caching server enough information to show the next part, which may prove helpful if streaming from peers.
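Here is a rough sketch of chaining chapters this way. One wrinkle worth noticing: if an item's ID is a hash of its content, and that content includes the next piece's ID, then the chain has to be built from the last chapter backwards. The function names and the dictionary layout are assumptions of mine.

```python
import hashlib
import json


def compute_id(item: dict) -> str:
    canonical = json.dumps(item, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_chain(title: str, chapters: list):
    """Link chapters through next_piece; returns (first_id, {id: item})."""
    store = {}
    next_id = None
    # Work backwards: each item's ID depends on its next_piece value,
    # so the final chapter has to be hashed first.
    for number, text in reversed(list(enumerate(chapters, start=1))):
        item = {
            "title": f"{title}, chapter {number}",
            "mime": "text/plain",
            "body": text,
            "next_piece": next_id,
        }
        next_id = compute_id(item)
        store[next_id] = item
    return next_id, store


def read_in_order(first_id, store: dict):
    """Yield each body by following next_piece until the chain ends."""
    current = first_id
    while current is not None:
        item = store[current]
        yield item["body"]
        current = item["next_piece"]


first_id, store = build_chain("My Novel", ["It began.", "It continued.", "It ended."])
print(list(read_in_order(first_id, store)))
# ['It began.', 'It continued.', 'It ended.']
```

The pieces can arrive from peers in any order; the reader only needs the first ID to play them back in sequence.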

Related

Related is a key piece of metadata to allow decentralised interaction. There can be none, one, or many related links. Each exists as a pair of values – the id and the rel – in the same way that a semantic link has both an href (id) and a rel value. The possible content of rel is left undefined so as to allow its use to be developed by consensus.

This allows a comment to be part of a threaded discussion, in response to one or more items, or simply to place content within a wider context. Related would be where serialised works could acknowledge the media they follow on from.
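As an illustration, a reader could rebuild a threaded discussion from nothing more than those id/rel pairs. In this sketch the short IDs stand in for real digests, and `"reply"` is just an example rel value I have picked; the community would settle on its own vocabulary.

```python
from collections import defaultdict


def replies_index(items: dict) -> dict:
    """Map each item ID to the IDs of items that declare a 'reply' relation to it."""
    index = defaultdict(list)
    for item_id, item in items.items():
        for link in item.get("related", []):
            if link["rel"] == "reply":  # illustrative rel value, not a defined standard
                index[link["id"]].append(item_id)
    return index


def thread_lines(items: dict, root_id: str, index: dict, depth: int = 0) -> list:
    """Return the titles of a thread, indented two spaces per level of nesting."""
    lines = ["  " * depth + items[root_id]["title"]]
    for child_id in index.get(root_id, []):
        lines.extend(thread_lines(items, child_id, index, depth + 1))
    return lines


items = {
    "a1": {"title": "Your new headline", "related": []},
    "b2": {"title": "Re: Your new headline", "related": [{"id": "a1", "rel": "reply"}]},
    "c3": {"title": "Re: Re: Your new headline", "related": [{"id": "b2", "rel": "reply"}]},
}
print("\n".join(thread_lines(items, "a1", replies_index(items))))
```

The original post knows nothing about its replies; the thread exists only because each reaction points back at what it is reacting to.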

Meta

Finally, there is meta. This is here for whatever ways the community may find to expand on the basics or to provide additional data about the content.

Some possible meta values could include:

- Description

- Abstract (for a paper)

- Author(s)

- Contact

- Genre

- Age rating

- Category

- Tags

- Open graph element

While the format of meta elements is specifically left undefined, I nevertheless imagine it would be used largely as property and content pairs, as an HTML meta tag might be.
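If meta did end up as simple property and content pairs, a display UI could turn it into HTML meta tags with very little effort. This is only a sketch; the `meta_to_html` name and the sample properties are made up for the example.

```python
from html import escape


def meta_to_html(meta: dict) -> list:
    """Render each property/content pair as an HTML meta tag, escaping both values."""
    return [
        f'<meta property="{escape(str(key))}" content="{escape(str(value))}">'
        for key, value in meta.items()
    ]


tags = meta_to_html({"og:title": 'A "quoted" title', "genre": "Essay"})
print("\n".join(tags))
# <meta property="og:title" content="A &quot;quoted&quot; title">
# <meta property="genre" content="Essay">
```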

Conclusion

All that remains is to define a series of REST-based API methods that nodes and clones can use to request, discover, and subscribe to sources of media. All of the technology exists already: XML, JSON, REST, BitTorrent, magnet links, URIs, and content management systems.
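To give a flavour of what those methods might look like, here is a toy request handler covering request, discover, and subscribe. Every route, status code, and field name here (`/items`, `/subscriptions`, `callback`) is hypothetical; nothing of the sort has actually been defined.

```python
import json


class NodeApi:
    """Toy request handler for one node; the routes are illustrative only."""

    def __init__(self):
        self.items = {}            # id -> item this node holds
        self.subscribers = set()   # URLs of nodes that asked to hear about new items

    def handle(self, method: str, path: str, body: str = None):
        """Return (status_code, json_text) for a request."""
        if method == "GET" and path == "/items":
            # Discover: list what this node is willing to serve.
            listing = [{"id": i, "title": it.get("title", "")} for i, it in self.items.items()]
            return 200, json.dumps(listing)
        if method == "GET" and path.startswith("/items/"):
            # Request: fetch a single item by its digest.
            item = self.items.get(path[len("/items/"):])
            if item is None:
                return 404, json.dumps({"error": "not found"})
            return 200, json.dumps(item)
        if method == "POST" and path == "/subscriptions":
            # Subscribe: remember a node that wants to follow this one.
            self.subscribers.add(json.loads(body)["callback"])
            return 201, json.dumps({"subscribed": True})
        return 405, json.dumps({"error": "unsupported"})


api = NodeApi()
api.items["abc123"] = {"title": "Hello", "mime": "text/plain", "body": "First post"}
status, text = api.handle("GET", "/items/abc123")
print(status, json.loads(text)["title"])  # 200 Hello
```

A real node would sit behind an ordinary web server, but the shape of the conversation would be much the same.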

My question to you is this – could peer based content and media be the next step for social media?

Ages ago, I suggested that we should combine blogs and BitTorrent. I drew diagrams and everything.

https://matthewdbrown.authorbuzz.co.uk/lordmatt/news-and-updates/bittorrent-based-publishing/

I should point out that ActivityPub does pretty much the same thing (more or less) and is already well established and functional.